Most DJs are still arguing about whether AI belongs in music. Meanwhile — the clubs already moved. Not metaphorically. Literally. The physical infrastructure of how spaces sound, how they breathe, how they respond to 400 sweaty bodies at 1am — all of it is being quietly rebuilt. And if you’re not paying attention, you’ll walk into venues that were designed without you in mind.

Why This Is Happening Faster Than Anyone Admits

There’s this moment I keep thinking about. I was doing a warm-up set at a venue in late 2023 — mid-sized room, about 600 capacity — and the monitors sounded different in the second hour than they did at soundcheck. Not broken. Just… different. Tighter. I asked the sound engineer and he mentioned, almost casually, that the room’s processing system auto-adjusts based on occupancy. I didn’t fully understand what that meant then.

I do now.

Venues in Berlin, Tokyo, and London (and honestly more places than get reported) are already running adaptive audio systems, generative lighting rigs, and crowd-response tech. This isn’t a pilot program or a festival gimmick. It’s becoming standard venue infrastructure for mid-to-large capacity clubs. Whether clubs will change stopped being the question about two years ago. Now it’s: how fast, and who’s steering it.

The Room That Listens Back

Acoustics Aren’t Static Anymore

Traditional club design treats acoustics like architecture — build it, tune it, done. Fixed. AI-driven systems (some built on spatial audio frameworks originally developed for gaming and VR environments, weirdly enough) can now adjust EQ, reverb tail length, and delay in real time. Based on crowd density. Based on temperature. Based on how much the room is absorbing versus reflecting at any given moment.

Which means — and this matters — a track that absolutely lands at 2am might hit completely differently at midnight when the floor is half-empty. The room isn’t neutral anymore. It has a state.
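One physical reason the room has a state: bodies absorb sound. A rough way to see it is Sabine’s reverberation formula, RT60 = 0.161·V / A, where V is room volume in m³ and A is total absorption in m² (sabins). The sketch below assumes a hypothetical 20 × 15 × 6 m box, an average surface absorption coefficient of 0.15, and about 0.5 m² of absorption per standing person. All three are illustration values, not measurements of any real venue.

```python
def sabine_rt60(volume_m3: float, absorption_m2: float) -> float:
    """Sabine reverberation time in seconds (metric form: RT60 = 0.161 * V / A)."""
    return 0.161 * volume_m3 / absorption_m2


def room_rt60(people: int,
              length_m: float = 20.0, width_m: float = 15.0, height_m: float = 6.0,
              avg_surface_alpha: float = 0.15,
              absorption_per_person_m2: float = 0.5) -> float:
    """Rough RT60 for a box-shaped room plus a crowd.

    Every default here is a hypothetical illustration value, not a measurement.
    """
    volume = length_m * width_m * height_m
    surface = 2 * (length_m * width_m + length_m * height_m + width_m * height_m)
    # Room surfaces and the crowd both add absorption (m^2 sabins).
    absorption = surface * avg_surface_alpha + people * absorption_per_person_m2
    return sabine_rt60(volume, absorption)


if __name__ == "__main__":
    for crowd in (0, 300, 600):
        print(f"{crowd:>3} people -> RT60 ~ {room_rt60(crowd):.2f} s")
```

Under those assumptions the model goes from about 1.9 s of reverb with an empty floor to roughly 0.96 s at 300 people and 0.64 s at 600. Sabine tends to overestimate in very absorptive rooms (the Eyring formula is the usual refinement), but the direction is the point: a half-empty floor at midnight is a genuinely different acoustic space from a full one at 2am.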

For DJs, this fundamentally changes the prep calculus. You’re not just reading a static room. You’re reading a room that’s also reading itself.

⚠️ COMMON MISTAKE: Assuming the monitor mix you heard at soundcheck will hold all night. In AI-integrated venues, it won’t — and that’s by design, not a malfunction.
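Temperature, one of the inputs listed above, shows why with simple numbers. Sound travels faster in warm air, so a fill speaker that was delay-aligned to the mains at soundcheck drifts slightly as a packed room heats up. Here’s a minimal sketch, assuming the common linear approximation c ≈ 331.3 + 0.606·T m/s (T in °C). The 12 m path difference and both temperatures are hypothetical, and this is not how any particular venue processor works internally.

```python
def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s); linear fit valid near room temperature."""
    return 331.3 + 0.606 * temp_c


def fill_delay_ms(path_difference_m: float, temp_c: float) -> float:
    """Delay (ms) a fill speaker needs so its sound arrives in step with the mains.

    path_difference_m: how much farther the mains are from the listening zone
    than the fill speaker is (hypothetical geometry for illustration).
    """
    return 1000.0 * path_difference_m / speed_of_sound(temp_c)


if __name__ == "__main__":
    # Hypothetical 12 m path difference: cool room at soundcheck vs. packed, warm room.
    for temp in (18.0, 28.0):
        print(f"{temp:.0f} C -> {fill_delay_ms(12.0, temp):.2f} ms")
```

With those made-up numbers the required delay drops from roughly 35.1 ms at 18 °C to about 34.5 ms at 28 °C. Small, but it’s exactly the kind of drift an adaptive system can correct without anyone touching the desk.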

Lighting That Follows Your Architecture (Sort Of)

Static lighting rigs are — slowly, but genuinely — being replaced by systems that analyze BPM, frequency output, and energy spikes to generate synchronized visual environments. Tools built on frameworks similar to TouchDesigner, layered with ML audio analysis, can map the structural shape of a set in real time.

Here’s the thing though. It’s not perfect. The systems are reactive, not predictive. They respond to what already happened in the last 4 bars — not what you’re about to do. There’s a latency in the intelligence, if that makes sense. Which actually creates an interesting creative opportunity if you know how to use it. More on that below.
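Here’s what “reactive, not predictive” looks like in miniature. At 128 BPM, four bars of 4/4 is 16 beats, or 7.5 seconds. The sketch below is a hypothetical reactor that averages energy over the last four bars and fires when that average crosses a threshold. It’s a stand-in to show where the lag comes from, not any lighting vendor’s algorithm, and the energy values are made up.

```python
from collections import deque


def bars_to_seconds(bars: float, bpm: float, beats_per_bar: int = 4) -> float:
    """Length of `bars` bars in seconds at a fixed tempo (4/4 by default)."""
    return bars * beats_per_bar * 60.0 / bpm


def reactive_trigger_beats(energy_per_beat, window_beats: int, threshold: float):
    """Beat indices where a trailing-average reactor fires.

    It only sees the past: it averages the last `window_beats` values and
    fires once each time that average rises above `threshold`.
    """
    window = deque(maxlen=window_beats)
    fired = []
    above = False
    for i, e in enumerate(energy_per_beat):
        window.append(e)
        avg = sum(window) / len(window)
        if avg > threshold and not above:
            fired.append(i)
        above = avg > threshold
    return fired


if __name__ == "__main__":
    bpm = 128
    # Made-up energy: 8 bars of breakdown, then the drop lands on beat 32.
    energy = [0.2] * 32 + [1.0] * 32
    # Threshold sits just above the 0.6 midpoint between breakdown and drop energy.
    fires = reactive_trigger_beats(energy, window_beats=16, threshold=0.62)
    lag_beats = fires[0] - 32
    print(f"4 bars at {bpm} BPM = {bars_to_seconds(4, bpm):.2f} s")
    print(f"reactor fires on beat {fires[0]}, {lag_beats} beats "
          f"({bars_to_seconds(lag_beats / 4, bpm):.2f} s) after the drop")
```

With these numbers the drop lands on beat 32 but the reactor fires on beat 40: two bars, or 3.75 seconds, late. Shrink the window and it reacts faster but flickers on noisy material. That trade-off is the latency in the intelligence, and knowing roughly how long it is can let you place a drop or a breakdown so the visuals catch up when you want them to.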

The Data Layer. This Is The Part Most People Skip.

Okay so — underneath all the visual and audio tech — there’s a data infrastructure that almost nobody in the DJ world is talking about seriously. And it’s the part that will have the most long-term impact on how DJs get booked, programmed, and evaluated.

Modern venues are collecting: crowd movement via thermal imaging, dwell time by zone, peak density windows, drink purchase velocity correlated to set energy — yes, really — and audience response metrics that get fed back into booking analytics. Some clubs in Europe have been doing versions of this since 2022.

This is the part that should make you stop.

The most valuable DJ in 2026 isn’t the one with the best ears. It’s the one who understands what the room already knows about itself — and mixes accordingly.

That data will shape what music gets programmed. At what tempo. During which hour. It’ll influence residency decisions. It might already be doing that and nobody told you.

DJs who understand even the basics of this data layer, who can speak intelligently about energy arc management, crowd retention, and set pacing as measurable outputs, will have a structural edge that has nothing to do with technical mixing ability.
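Most of the metrics in that data layer aren’t exotic math. Dwell time by zone and peak density windows, for example, come down to a few lines of arithmetic once the sensor data exists. A minimal sketch, assuming a hypothetical schema of (zone, enter minute, exit minute) visits and a dance-floor headcount sampled every 15 minutes. Real venue systems will have their own formats and far messier data.

```python
from collections import defaultdict


def dwell_minutes_by_zone(visits):
    """Total and mean dwell time per zone.

    visits: iterable of (zone, enter_min, exit_min) tuples. This schema is
    hypothetical; real venue systems will have their own formats.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for zone, enter_min, exit_min in visits:
        totals[zone] += exit_min - enter_min
        counts[zone] += 1
    return {z: (totals[z], totals[z] / counts[z]) for z in totals}


def peak_density_window(headcounts, window: int):
    """Start index and mean headcount of the busiest run of `window` consecutive samples."""
    if not 0 < window <= len(headcounts):
        raise ValueError("window must be between 1 and len(headcounts)")
    best_start = 0
    running = best = sum(headcounts[:window])
    for start in range(1, len(headcounts) - window + 1):
        # Slide the window one sample: add the newest value, drop the oldest.
        running += headcounts[start + window - 1] - headcounts[start - 1]
        if running > best:
            best, best_start = running, start
    return best_start, best / window


if __name__ == "__main__":
    visits = [("floor", 0, 45), ("floor", 10, 100), ("bar", 30, 40), ("bar", 50, 65)]
    print(dwell_minutes_by_zone(visits))
    # Hypothetical dance-floor headcount, one sample every 15 minutes from 23:00.
    counts = [80, 150, 240, 320, 410, 430, 390, 300]
    start, mean = peak_density_window(counts, window=3)
    print(f"busiest 45 min starts at sample {start}, mean headcount {mean:.0f}")
```

Here the busiest 45 minutes start at sample 4 (midnight, if sampling starts at 23:00) with a mean of 410 people. Line that window up against who was playing at the time and it’s easy to see how the same numbers could end up in a booking conversation.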

💡 PRO TIP: Start learning basic signal chain concepts now: how your audio output interacts with DMX lighting systems, and how MIDI-triggered visual rigs work at the input level. You don’t need to become a technician. You need enough vocabulary to collaborate intelligently with the people building these systems.
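Two pieces of that vocabulary, made concrete. A DMX512 universe carries up to 512 channels, each holding an 8-bit value from 0 to 255. A MIDI Note On message is three bytes: a status byte (0x90 plus the channel number, 0 to 15), a note number, and a velocity (both 0 to 127). The sketch below maps a mix level onto one DMX channel and builds a Note On for a visual trigger. The dB-to-DMX curve, the channel assignments and note 36 are hypothetical choices, and actually sending these values would need a DMX interface or MIDI port that this sketch doesn’t touch.

```python
def level_to_dmx(level_db: float, floor_db: float = -60.0) -> int:
    """Map an audio level in dBFS to an 8-bit DMX channel value (0-255).

    Levels at or below `floor_db` map to 0 and 0 dBFS maps to 255. The
    linear-in-dB curve and the -60 dB floor are illustrative, not a standard.
    """
    clamped = min(max(level_db, floor_db), 0.0)
    return round(255 * (clamped - floor_db) / (0.0 - floor_db))


def midi_note_on(channel: int, note: int, velocity: int) -> bytes:
    """Raw 3-byte MIDI Note On. `channel` is 0-15 (shown as 1-16 in most software)."""
    if not (0 <= channel <= 15 and 0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("channel must be 0-15; note and velocity 0-127")
    return bytes([0x90 | channel, note, velocity])


if __name__ == "__main__":
    dmx_universe = [0] * 512                 # one DMX512 universe: 512 channels, 0-255 each
    dmx_universe[0] = level_to_dmx(-12.0)    # hypothetical: dimmer patched to DMX channel 1
    print(dmx_universe[:4])
    # Hypothetical: note 36 on MIDI channel 1 fires a clip in a visual rig.
    print(midi_note_on(0, 36, 100).hex())
```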

What Actually Changes for Dance Culture

The Floor Becomes Programmable. Kind Of.

Here’s where I want to be careful — because this could sound dystopian if I phrase it wrong, and that’s not the point.

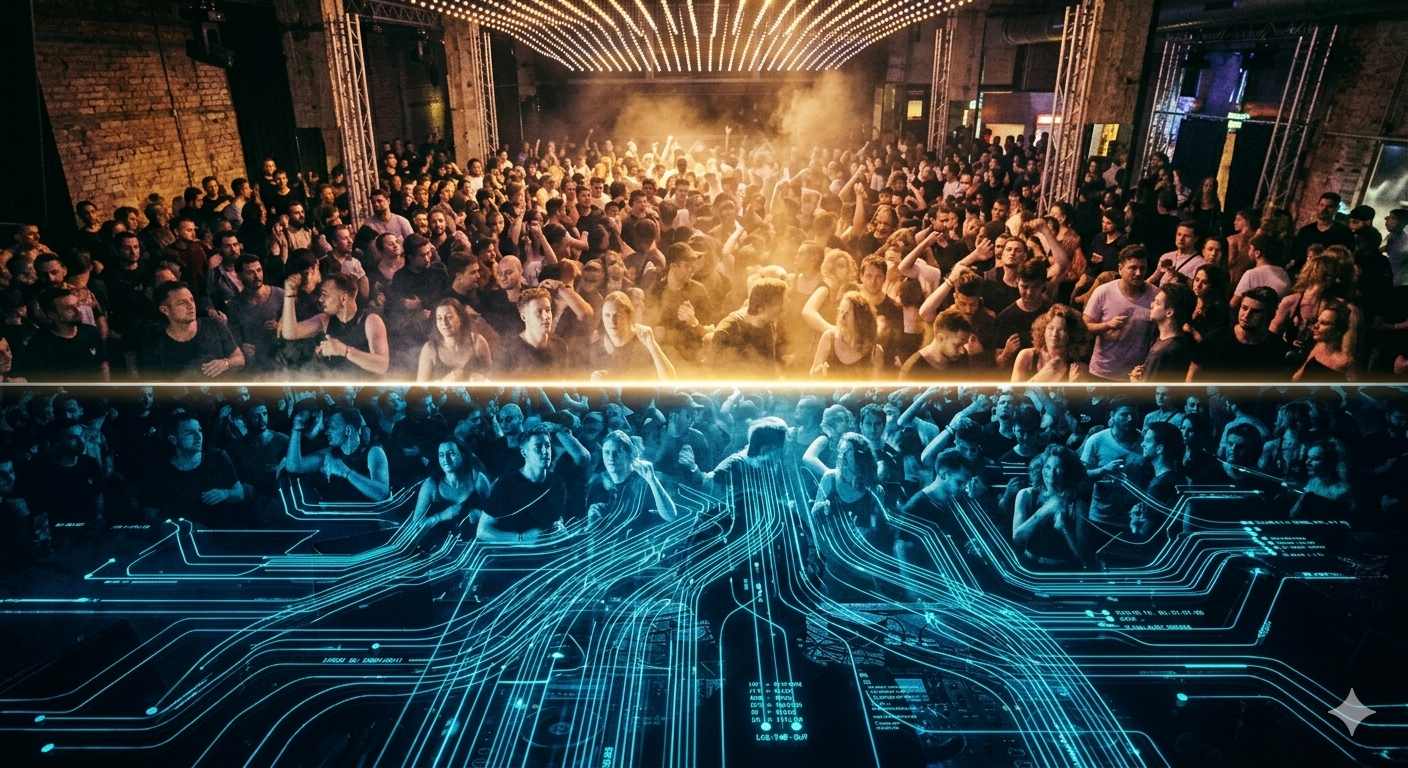

When lighting, sound, and spatial design are algorithmically synchronized, the experience architecture of a club night becomes intentional in a way it never was before. Energy peaks, emotional arcs, release points — stuff that good DJs have managed intuitively for decades — can now be modeled, anticipated, and partially designed into the room’s operating environment.

Partially. That’s the operative word.

Human energy is still chaotic. Still unpredictable. AI can model crowd behavior from data patterns but it cannot — genuinely cannot — account for the weird moment when a track hits a specific emotional nerve in a specific room on a specific night. That variable still belongs entirely to the DJ and the floor.

Hybrid Formats Are Already Here

AI-assisted event formats — part live performance, part generative environment — have moved from experimental to semi-regular in certain markets. A set where the visual world is partially co-generated by AI based on your track selections, in real time, as you mix. Clubs in Seoul, Amsterdam, and a few spots in LA have run formats like this.

The DJ in that setup isn’t a performer anymore, exactly. They’re more like — a creative director operating a multi-layered responsive system. Which honestly sounds more interesting than just standing behind a CDJ, if we’re being real about it.

Fyanso’s Take

Most DJs treat AI as a production tool. Something for the studio. Something that helps sort a library or write a bio. That’s the least interesting application — and I say that as someone who uses AI for exactly those things. The real structural shift is that AI is becoming the operating system of the venue itself. The room. The floor. The booking algorithm. DJs who only think about what’s happening inside the booth are — I don’t know how else to say this — optimizing the wrong variable. The operators who survive this transition are the ones who understand that the floor, the room, the data, and the system are all part of the same instrument. And they’re learning to play all of it.

🔧 TOOL: Resolume Arena + Essentia

Resolume Arena (v7 and above) lets you build audio-reactive visual layers that respond to specific frequency bands from your mix output. Pair it with Essentia, an open-source audio analysis and music information retrieval library that also ships pre-trained machine-learning models, and you have a basic generative visual rig you can run from a laptop.

Start here: map your kick drum’s frequency band to a single visual trigger. Get comfortable with the latency. Understand how the system responds — and where it lags. That understanding transfers directly to working inside professionally installed AI venue environments.

It takes about an hour to set up a basic version. The learning compounds quickly after that.
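If you want to see the kick-to-trigger idea in code before opening either app, here’s a minimal sketch. In a real rig the band analysis would come from Resolume’s audio analysis or from Essentia; this version swaps in a plain-Python one-pole low-pass filter and frame-by-frame RMS so it runs with no dependencies, and it does not use either tool’s API. The 120 Hz cutoff, 512-sample hop and 0.1 threshold are hypothetical starting values, and the test signal is synthetic.

```python
import math


def synth_pattern(sample_rate: int = 44100, seconds: float = 2.0, bpm: float = 120.0):
    """Hypothetical test signal: a 0.1 s burst of 60 Hz 'kick' on every beat,
    mixed with a constant 5 kHz 'hat' tone."""
    beat = int(sample_rate * 60.0 / bpm)   # samples per beat
    burst = int(sample_rate * 0.1)         # kick length in samples
    out = []
    for n in range(int(sample_rate * seconds)):
        t = n / sample_rate
        kick = 0.8 * math.sin(2 * math.pi * 60 * t) if n % beat < burst else 0.0
        hat = 0.5 * math.sin(2 * math.pi * 5000 * t)
        out.append(kick + hat)
    return out


def one_pole_lowpass(samples, cutoff_hz: float, sample_rate: int):
    """Simple first-order low-pass: passes the kick region, attenuates highs."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    y = 0.0
    out = []
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out


def kick_triggers(samples, sample_rate: int, cutoff_hz: float = 120.0,
                  hop: int = 512, threshold: float = 0.1):
    """Frame indices where low-band RMS rises above `threshold`.

    Fires once per rising edge and re-arms when the band drops back below.
    """
    low = one_pole_lowpass(samples, cutoff_hz, sample_rate)
    triggers = []
    armed = True
    for frame, start in enumerate(range(0, len(low) - hop + 1, hop)):
        block = low[start:start + hop]
        rms = math.sqrt(sum(v * v for v in block) / hop)
        if rms > threshold and armed:
            triggers.append(frame)   # this is where a visual clip would fire
            armed = False
        elif rms <= threshold:
            armed = True
    return triggers


if __name__ == "__main__":
    sr = 44100
    hits = kick_triggers(synth_pattern(sr), sr)
    print("trigger frames:", hits)
    print(f"frame length: {512 / sr * 1000:.1f} ms")
```

On the synthetic 120 BPM pattern it fires once per beat, on frames 0, 43, 86 and 129, while the 5 kHz “hat” tone stays below threshold. Each frame is 512 / 44100 ≈ 11.6 ms, so the trigger lands roughly a frame after the kick starts. That’s the latency to get comfortable with, and the same trade-off (bigger frames are steadier, smaller frames are quicker) is likely to show up in installed systems too.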

The System, Pulled Together

- AI is already deployed in venue acoustics, lighting, and crowd analytics — this is infrastructure, not concept, not prediction

- The room itself now has states — it responds differently across a night and DJs need to mix with that, not against it

- A data layer underneath modern venues is quietly influencing booking decisions and set programming — understanding it is a competitive edge

- Dance culture is shifting toward multi-sensory, partially programmable experiences — the DJ’s role is expanding in scope, not shrinking

- Hybrid live/generative formats exist now in Seoul, Amsterdam, LA — this is a present-tense reality, not a future forecast

- Basic signal chain literacy — DMX, MIDI, audio reactivity, spatial processing — is becoming a professional baseline

Your next move: Open Resolume Arena this week. Set up one audio-reactive layer synced to your mix output. Understand the latency. Build from there.