You’re still debating whether AI belongs in the booth. Honestly? That debate is already over. The DJs having that conversation in 2025 are the same ones who argued against digital DJing in 2009, and we know how that played out.

This isn’t about hype. It’s about where the leverage is moving, and whether you’re positioned to catch it.

Why This Is Happening Faster Than You Think

Here’s the thing nobody really talks about — DJing AI isn’t arriving. It’s already in your workflow. Rekordbox’s intelligent track suggestions. Mixed In Key running harmonic analysis on 500 tracks in the time it used to take you to manually check 10. Serato Stems separating vocals in real time. These aren’t previews of the future. They’re the floor.

Between 2022 and 2024, something shifted. Generative audio crossed a quality threshold most people weren’t watching. Stem separation went from a studio luxury to a live performance tool. LLMs — yeah, tools like Claude and GPT-4 — started proving genuinely useful for creative workflow scaffolding, not just drafting emails. The infrastructure for AI-assisted DJing isn’t coming. It’s already unevenly distributed across the people reading this.

What’s next isn’t one big moment. It’s five compounding layers — and they build on each other.

The 5 Trends That Will Actually Matter

1. Real-Time Harmonic and Energy Analysis (This One’s Closer Than You Think)

Right now, Mixed In Key 10 analyzes your tracks before the gig. You prep, you tag, you load crates. Standard.

The next version of this runs during the set: live monitoring of harmonic tension, energy arc, even crowd density data pulled from venue sensors or streaming engagement in real time. Pioneer’s DSP hardware has been creeping in this direction for a couple of years, quietly and without much fanfare, and Denon’s has too. The gap between “pre-analyzed” and “analyzed in the moment” is already shrinking, faster than the marketing suggests.

What this means for your workflow right now: pre-analysis becomes the baseline, not the edge. The DJs who win aren’t the ones with the best-tagged library — they’re the ones who know how to read and respond to live feedback loops. That’s a different skill. Start practicing it.
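To make “live feedback loop” concrete, here is a minimal Python sketch of one. It assumes nothing about how any real DJ hardware works. It treats the RMS loudness of incoming audio blocks as a crude stand-in for energy and reports whether the recent trend is rising or falling. The `EnergyMonitor` class and its `history` parameter are hypothetical, made up for illustration.

```python
import math
from collections import deque


class EnergyMonitor:
    """Keeps a short rolling history of block loudness (hypothetical helper)."""

    def __init__(self, history: int = 8) -> None:
        # Only the most recent `history` blocks are kept.
        self.levels: deque[float] = deque(maxlen=history)

    def push_block(self, samples: list[float]) -> float:
        """Store and return the RMS level of one block of samples in [-1, 1]."""
        if samples:
            rms = math.sqrt(sum(s * s for s in samples) / len(samples))
        else:
            rms = 0.0
        self.levels.append(rms)
        return rms

    def trend(self) -> float:
        """Average of the newer half of the history minus the older half."""
        if len(self.levels) < 2:
            return 0.0
        levels = list(self.levels)
        half = len(levels) // 2
        older, newer = levels[:half], levels[half:]
        return sum(newer) / len(newer) - sum(older) / len(older)


monitor = EnergyMonitor(history=4)
for amplitude in (0.1, 0.1, 0.5, 0.5):
    monitor.push_block([amplitude, -amplitude] * 512)
print(f"trend: {monitor.trend():+.2f}")  # positive means energy is rising
```

Real energy analysis would weigh far more than loudness, so treat this as a way to practice thinking in feedback loops, not as a meter to trust.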

2. AI Crate Building — But Only If Your Library Isn’t a Mess

Over 100,000 tracks hit streaming platforms every single day. I don’t say that to overwhelm you — I say it because manual filtering at that volume is genuinely impossible. Nobody has the hours. Nobody.

DJing AI tools built on recommendation engines trained specifically on DJ behavior (not listener behavior — huge difference) are going to become standard infrastructure. Not “here are songs you might like.” More like — suggest 12 tracks that transition cleanly from this outro, 128 BPM, A minor, high energy, no vocal chop drops, suited for a late-night warehouse context. That specificity is already achievable if you know how to prompt against structured metadata. Dedicated tools will package it into something accessible within the next 18 months.
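Here is a rough Python sketch of what that kind of query looks like once the metadata is structured. It is not how any particular tool works. The `Track` fields and the `suggest` function are hypothetical. Keys are assumed to be stored in Camelot notation (A minor is 8A), and compatibility follows the usual Camelot wheel rule: same code, one step up or down, or the relative major/minor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    title: str
    bpm: float
    camelot: str      # e.g. "8A" for A minor
    energy: int       # 1 (low) to 5 (peak)
    has_vocals: bool


def compatible_keys(camelot: str) -> set[str]:
    """Camelot codes that mix cleanly with the given one."""
    number, letter = int(camelot[:-1]), camelot[-1]
    up = number % 12 + 1            # 12 wraps around to 1
    down = (number - 2) % 12 + 1    # 1 wraps around to 12
    other = "B" if letter == "A" else "A"
    return {camelot, f"{up}{letter}", f"{down}{letter}", f"{number}{other}"}


def suggest(library: list[Track], current: Track, bpm_tolerance: float = 2.0,
            min_energy: int = 4, allow_vocals: bool = False,
            limit: int = 12) -> list[Track]:
    """Filter the library down to tracks that should follow `current` cleanly."""
    keys = compatible_keys(current.camelot)
    candidates = [
        t for t in library
        if t != current
        and abs(t.bpm - current.bpm) <= bpm_tolerance
        and t.camelot in keys
        and t.energy >= min_energy
        and (allow_vocals or not t.has_vocals)
    ]
    # Closest tempo first, so the easiest blends come out on top.
    candidates.sort(key=lambda t: abs(t.bpm - current.bpm))
    return candidates[:limit]
```

Every condition in that filter reads a tag. If the tag is missing or wrong, the track silently drops out of the results.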

Here’s the catch, though. And this is important — a disorganized library doesn’t get smarter just because the tool does. Garbage in, garbage out. It’s almost annoyingly simple.

Start now:

- Open Rekordbox or Serato

- Pick 50 tracks — your most-used ones

- Add structured tags: energy level (1–5), vocal presence (yes/no), set context (opener/peak/closer)

- Build the habit before you need the system
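If you keep those tags in a free-text comment field, a small schema keeps them consistent. The sketch below is one possible convention, not a Rekordbox or Serato feature. The comment format (`energy=4; vocals=no; context=peak`) and the names `TrackTags` and `parse_tags` are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class SetContext(Enum):
    OPENER = "opener"
    PEAK = "peak"
    CLOSER = "closer"


@dataclass(frozen=True)
class TrackTags:
    energy: int          # 1 (low) to 5 (peak)
    has_vocals: bool
    context: SetContext

    def __post_init__(self) -> None:
        if not 1 <= self.energy <= 5:
            raise ValueError(f"energy must be 1-5, got {self.energy}")


def parse_tags(comment: str) -> TrackTags:
    """Parse a comment such as 'energy=4; vocals=no; context=peak'."""
    pairs = (part.split("=", 1) for part in comment.split(";") if part.strip())
    fields = {key.strip().lower(): value.strip().lower() for key, value in pairs}
    return TrackTags(
        energy=int(fields["energy"]),
        has_vocals=fields["vocals"] == "yes",
        context=SetContext(fields["context"]),
    )


print(parse_tags("energy=4; vocals=no; context=peak"))
```

The point of the validation is that a typo fails loudly while you are tagging, instead of quietly corrupting results later.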

💡 PRO TIP: The DJs who’ll extract the most from AI crate tools are the ones who treated their library like a database before those tools existed. You’re building the foundation now, even if the house isn’t ready yet.

3. Generative Stems — The One Everyone’s Either Excited or Terrified About

Stem separation is real and it works. Moises, Lalal.ai, Serato Stems — you can pull an acappella from almost any track in under two minutes now. That part’s settled.

What’s coming next is weirder and more interesting: generative stems. Not isolating what exists, but generating new harmonic or rhythmic layers in real time that actually fit the key and tempo of whatever’s playing. Stable Audio, Suno, and Udio are primitive, almost clunky early steps in that direction, but they’re pointing somewhere specific. The DJ-specific application (a live bassline fill, a custom transition element, an extended outro generated on the fly) is probably 2 to 3 years from being booth-stable.

When it lands, the boundary between DJ and producer in a live context essentially dissolves. That’s not alarming — it’s an expansion. But it does mean the DJs who understand arrangement and music theory, not just mixing mechanics, are going to have a structural edge. That gap is worth closing now, not later.

4. Performance Intelligence — Your Sets as Data

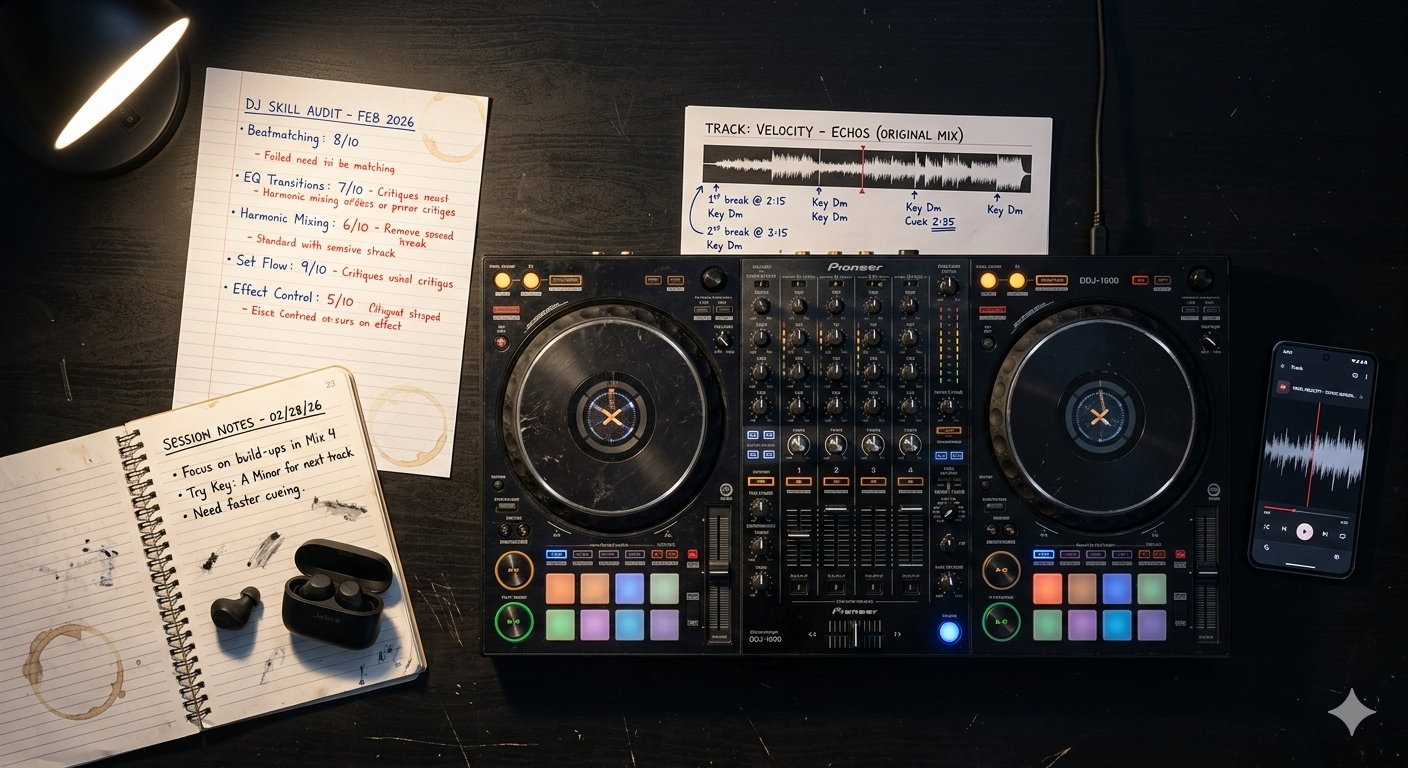

After a gig, what do you actually review? Maybe you listen back. Maybe you check a setlist scribbled on your phone. Most DJs — honestly, most — walk away with vibes and a vague sense of what worked.

That’s leaving a compounding feedback loop completely untouched.

Performance intelligence tools — and they’re being built right now, some in beta — will cross-reference your track selection, transition timing, energy curve, and crowd engagement data after every set. Then surface patterns. “Your energy dips consistently between 40 and 55 minutes. Here are four tracks that historically perform well in that window given your style.”
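A pattern like that energy dip can be sketched with very little code. This is a guess at the general idea, not how any of those tools work. It assumes you have `(minute, energy)` pairs from your own logs, with energy on a 1 to 5 scale. The function names, the 15-minute window, and the `margin` threshold are all arbitrary choices for illustration.

```python
from collections import defaultdict
from statistics import mean


def energy_by_window(entries: list[tuple[float, int]],
                     window_minutes: int = 15) -> dict[int, float]:
    """Average energy per time window, keyed by the window's start minute."""
    buckets: dict[int, list[int]] = defaultdict(list)
    for minute, energy in entries:
        start = int(minute // window_minutes) * window_minutes
        buckets[start].append(energy)
    return {start: mean(values) for start, values in sorted(buckets.items())}


def find_dips(entries: list[tuple[float, int]], window_minutes: int = 15,
              margin: float = 1.0) -> list[tuple[int, int]]:
    """Windows whose average energy sits `margin` or more below the set average."""
    if not entries:
        return []
    overall = mean(energy for _, energy in entries)
    windows = energy_by_window(entries, window_minutes)
    return [
        (start, start + window_minutes)
        for start, average in windows.items()
        if average <= overall - margin
    ]


# Made-up log: minute into the set, energy rating of the track playing.
log = [(5, 3), (20, 4), (35, 4), (47, 2), (55, 2), (65, 5), (80, 5)]
print(find_dips(log))
```

Run it across many sets instead of one and the recurring dips are the ones worth fixing.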

This isn’t science fiction. Professional sports have operated this way for over a decade: every pass, sprint, and tactical decision logged, analyzed, iterated. The same logic applied to live performance is just… obvious, once you see it.

Start building your data foundation before the tools catch up:

- Log every set — track name, BPM, key, approximate time in set

- Note transition type (blend, cut, FX drop)

- Flag moments that got a visible reaction — positive or negative

- Use a simple Notion table or even a Google Sheet

When smarter tools arrive, you’ll have something to feed them. Most DJs won’t.
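If a spreadsheet feels like too much friction, the same log can live in a plain CSV file that opens in Google Sheets later. Below is a minimal sketch using only the Python standard library. The column names and the `SetLogEntry` and `append_to_log` helpers are hypothetical, so rename them to match how you think about your sets.

```python
import csv
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class SetLogEntry:
    gig: str
    minute: int        # approximate time in set
    track: str
    bpm: float
    key: str
    transition: str    # "blend", "cut", or "fx_drop"
    reaction: str      # "positive", "negative", or "" if nothing visible


def append_to_log(path: str, entries: list[SetLogEntry]) -> None:
    """Append entries to a CSV file, writing the header only for a new file."""
    log_path = Path(path)
    is_new = not log_path.exists() or log_path.stat().st_size == 0
    with log_path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(
            handle, fieldnames=[f.name for f in fields(SetLogEntry)]
        )
        if is_new:
            writer.writeheader()
        for entry in entries:
            writer.writerow(asdict(entry))


def read_log(path: str) -> list[dict[str, str]]:
    """Load every logged row back as a dictionary of strings."""
    with Path(path).open(newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))
```

One file for every gig, appended to after each set, is enough. The structure matters more than the tool.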

⚠️ COMMON MISTAKE: Treating every gig as a standalone event. No logging, no pattern analysis, no iteration. You’re performing and discarding the data that could be compounding your improvement.

5. Content Production — The Output Layer Most DJs Are Ignoring

The performance is the source material. The content is where the career leverage increasingly lives.

I remember — back when I was doing 4-hour sets in venues that held maybe 200 people — thinking that if I just got good enough, the opportunities would find me. They didn’t. Not because the sets weren’t good. Because nobody outside that room ever saw them.

DJing AI tools for content are moving fast. Auto-generated highlight clips from long-form streams. Captions drafted in your brand voice. Waveform visualizers synced to peak moments. Music-reactive visuals generated without touching editing software. Tools like Opus Clip, Descript, and Gling are already doing versions of this — not DJ-specific yet, but the applications are obvious.

The DJ with a system for converting one 2-hour set into 15 platform-specific content pieces will out-distribute the technically superior DJ with no output infrastructure. Every time.
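One small piece of such a system can be sketched in a few lines: turning the moments you flagged as peaks into clip ranges to cut from the recording. This is only an illustration. The `clip_windows` function and its `lead_in` and `tail` defaults are assumptions, and it does no actual video editing.

```python
def clip_windows(peaks_sec: list[int], set_length_sec: int,
                 lead_in: int = 20, tail: int = 25) -> list[tuple[int, int]]:
    """Turn peak timestamps (in seconds) into merged (start, end) clip ranges."""
    windows: list[tuple[int, int]] = []
    for peak in sorted(peaks_sec):
        start = max(0, peak - lead_in)
        end = min(set_length_sec, peak + tail)
        if windows and start <= windows[-1][1]:
            # Overlaps the previous clip, so extend it instead of duplicating.
            previous_start, previous_end = windows[-1]
            windows[-1] = (previous_start, max(previous_end, end))
        else:
            windows.append((start, end))
    return windows


def as_timestamp(seconds: int) -> str:
    """Format seconds as H:MM:SS for pasting into an editor."""
    hours, remainder = divmod(seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours}:{minutes:02d}:{secs:02d}"


# Peaks flagged at 10 s, 10 min, 10 min 10 s and 50 min of a 2-hour set.
for start, end in clip_windows([600, 610, 3000, 10], set_length_sec=7200):
    print(as_timestamp(start), "to", as_timestamp(end))
```

The input is the same reaction data from your set log, which is why the logging habit pays off twice.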

This isn’t about posting more content. It’s about building a machine that runs alongside your creative work — not instead of it. Those are different things.

Fyanso’s Take

Most DJs are going to use AI to accelerate the exact things they’re already doing wrong.

More tracks downloaded into a library with no tagging system. More content posted with no brand coherence. More prep time spent solving problems that shouldn’t exist in the first place. AI is an amplifier — it makes your existing workflow faster and louder. If that workflow is chaotic, you get faster chaos. Louder mess.

The DJs who actually win the next five years aren’t the early adopters of every shiny tool. They’re the ones who build a clean operational foundation first — then layer AI on top of something worth accelerating. Sequence matters here. Systems before software. In that exact order.

🔧 TOOL: Rekordbox 6 + ChatGPT. Export your track library from Rekordbox (File > Export Collection in xml format, or a playlist exported as a text file) and convert it to CSV. Rekordbox does not write CSV directly, so expect one conversion step. Drop the result into ChatGPT with this prompt: “Analyze this music library. Identify gaps in BPM distribution, key coverage, and energy range. Suggest 10 specific tracks to address each gap.” It won’t be perfect, since the suggestions are only as good as your metadata, but it converts a 3-hour curation audit into roughly 20 minutes of structured output. That’s a real time delta, not a theoretical one.
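You can also run the BPM part of that audit yourself before handing anything to a chatbot. The sketch below assumes the library is already in CSV form with a column named `BPM`. That column name is a guess, so adjust it to whatever your file actually contains. The function names and the 5 BPM bucket size are likewise illustrative.

```python
import csv
import io
from collections import Counter


def bpm_histogram(rows, bucket: int = 5, column: str = "BPM") -> Counter:
    """Count tracks per BPM bucket, skipping rows with a missing or bad BPM."""
    counts: Counter = Counter()
    for row in rows:
        try:
            bpm = float(row[column])
        except (KeyError, TypeError, ValueError):
            continue
        counts[int(bpm // bucket) * bucket] += 1
    return counts


def bpm_gaps(counts: Counter, bucket: int = 5, min_tracks: int = 1) -> list[int]:
    """Buckets between your slowest and fastest tracks with too few tracks."""
    if not counts:
        return []
    lowest, highest = min(counts), max(counts)
    return [
        start for start in range(lowest, highest + bucket, bucket)
        if counts.get(start, 0) < min_tracks
    ]


# Made-up sample standing in for an exported library file.
sample = "Title,BPM\nOne,120\nTwo,122\nThree,124\nFour,128\nFive,140\nSix,\n"
histogram = bpm_histogram(csv.DictReader(io.StringIO(sample)))
print(bpm_gaps(histogram))  # bucket start values with no tracks
```

For a real file, replace the `io.StringIO(sample)` part with an opened file handle. Raising `min_tracks` shows thin spots as well as empty ones.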

The System Recap

- DJing AI is already inside your current tools — the question isn’t adoption, it’s whether your workflow is ready to benefit

- Real-time analysis, AI crate building, generative stems, performance intelligence, content automation — five layers, each compounding the one before it

- Clean metadata and structured tagging are the prerequisite for every AI capability above them

- Post-gig data logging builds the personal performance dataset that future tools will actually analyze

- Content infrastructure built around AI multiplies output without multiplying hours

- The threat isn’t AI replacing DJs — it’s operators with systems replacing performers without them

One thing to do today: Export your Rekordbox library (the XML collection export works), convert it to CSV or open it in a spreadsheet, and look at it as a dataset of columns, patterns, and gaps, not a playlist. That one perspective shift is where all of this starts.

The DJing.ai newsletter goes one layer deeper every week — one workflow, one system, one practical application of AI for DJs who want to operate with more precision and less wasted time. No filler. No recycled takes. [Subscribe and get the next system in your inbox.]